Companies are spending billions on artificial intelligence. They are hiring data scientists, buying GPU clusters, and licensing the latest large language models. Yet most AI projects never make it past the pilot stage.

The pattern is consistent across industries. A team builds an impressive prototype. Leadership celebrates. Then the initiative quietly stalls, burns budget, or creates a liability nobody anticipated. Gartner predicted in 2024 that at least 30% of generative AI projects would be abandoned after proof of concept by the end of 2025.

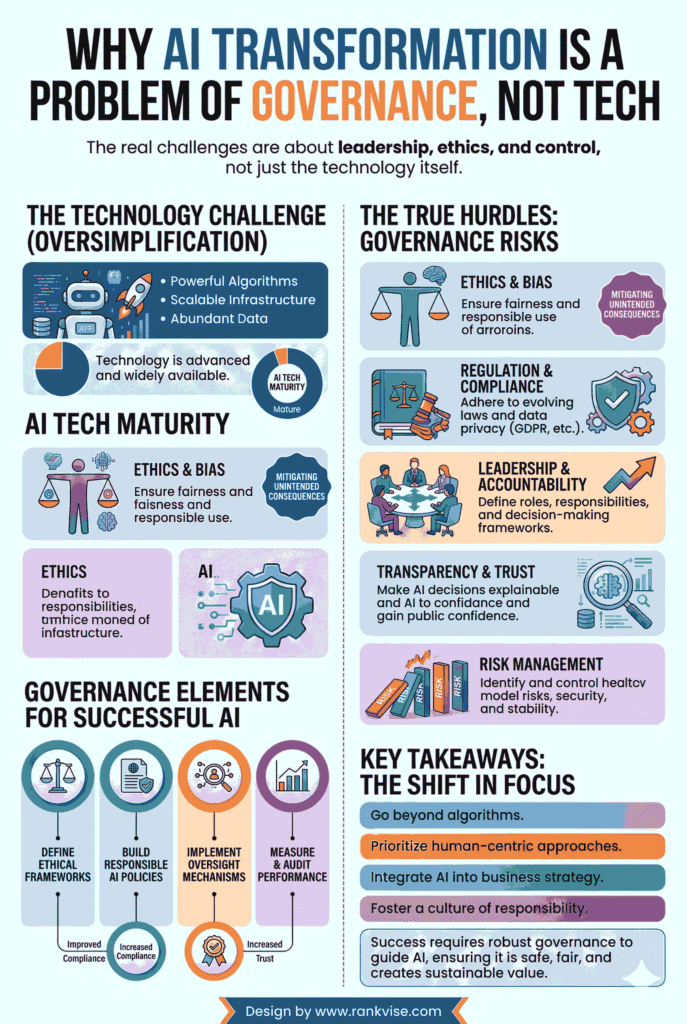

The reason is rarely a broken algorithm. AI transformation is a problem of governance. Organizations fail not because their technology underperforms, but because they lack the structure, oversight, and policies to scale AI responsibly.

What Does AI Governance Actually Mean?

AI governance is the system of rules, roles, and processes that guide how an organization develops, deploys, and monitors artificial intelligence. It covers everything from who approves an AI use case to how the company handles a biased output.

Think of it as the operating manual for AI across your business. Without one, every team makes its own rules. Data scientists build models with no input from legal. Marketing deploys a chatbot with no review from compliance. Finance automates forecasting with no audit trail.

A strong AI governance framework prevents that fragmentation. It aligns AI projects with business goals, regulatory requirements, and ethical standards before a single line of code ships to production.

Why Traditional IT Oversight Falls Short for AI

Most companies already have IT governance. They manage software releases, security patches, and access controls effectively. But AI does not behave like traditional software.

Conventional software is deterministic. The same input always produces the same output. AI systems are probabilistic. They generate responses based on patterns, confidence scores, and training data. That means they can produce different — and sometimes incorrect — answers to the same question.
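The contrast can be sketched in a few lines of Python. This is only an illustration: the `deterministic_tax` and `sample_answer` functions and the scores are hypothetical, and the sampler is a toy stand-in for a generative model rather than how any particular product works.

```python
import math
import random

def deterministic_tax(amount: float, rate: float = 0.2) -> float:
    """Conventional software: the same input gives the same output every time."""
    return round(amount * rate, 2)

def sample_answer(scores: dict[str, float], rng: random.Random) -> str:
    """Toy stand-in for a generative model: draw one answer at random,
    weighted by the exponentiated (softmax-style) hypothetical scores."""
    answers = list(scores)
    weights = [math.exp(scores[a]) for a in answers]
    return rng.choices(answers, weights=weights, k=1)[0]

# Hypothetical scores for the question "What is our refund window?"
SCORES = {"30 days": 2.0, "14 days": 1.0, "60 days": 0.5}

if __name__ == "__main__":
    rng = random.Random()
    # Identical values on every call.
    print([deterministic_tax(100.0) for _ in range(3)])
    # Answers can differ from call to call, including less likely ones.
    print([sample_answer(SCORES, rng) for _ in range(5)])
```

The second list can change from run to run, and lower-scored answers still appear occasionally. That is the behavior release checklists written for deterministic software do not anticipate.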

Three characteristics make AI uniquely difficult to govern:

- AI models can hallucinate, producing plausible but entirely fabricated information that looks authoritative to end users.

- Deep learning systems often operate as black boxes, making it difficult to explain why a specific decision was made.

- Models drift over time as they encounter new data, meaning a system that performed well at launch may degrade without warning.

These traits demand a governance approach built specifically for AI. Applying legacy IT controls to a probabilistic system leaves critical blind spots that traditional risk management was never designed to catch.

The Real Reasons AI Projects Fail

When companies treat AI as a purely technical challenge, predictable failure patterns emerge. Understanding these patterns reveals why AI transformation is a problem of governance at its core.

No Clear Ownership or Accountability

Many organizations launch AI initiatives without defining who is responsible for outcomes. When a hiring algorithm shows bias or a customer-facing chatbot gives harmful advice, leadership scrambles to assign blame. AI accountability must be established before deployment, not after an incident forces the question.

Shadow AI Spreads Unchecked

Research from UpGuard suggests that over 80% of employees in large enterprises now use AI tools that IT has not approved. This shadow AI creates enormous compliance and security gaps. Employees paste sensitive customer data into public AI tools. Teams build workflows around unvetted models. Without a governance policy that addresses shadow AI risks, the organization loses visibility into how AI touches its data and decisions.

Strategy and AI Efforts Are Disconnected

AI projects frequently start in isolated departments with no connection to the company’s broader enterprise AI strategy. A logistics team builds a demand forecasting model while the finance team builds a separate one using different data. The result is duplicated effort, inconsistent outputs, and wasted investment.

Compliance Is Treated as an Afterthought

The regulatory environment for AI is tightening rapidly. The EU AI Act introduces strict documentation, risk classification, and transparency requirements. Organizations that treat AI compliance and regulation as a late-stage checkbox face costly rework or outright project shutdowns when legal realities catch up.

What a Governance-First Approach Looks Like

Shifting from “build first, govern later” to a governance-first mindset does not slow down innovation. It accelerates it by removing the obstacles that cause projects to stall at scale.

Here is what that shift involves in practice:

| Governance Area | What It Covers | Business Impact |
|---|---|---|
| Risk classification | Categorize every AI use case by risk level before development starts | Prevents high-risk projects from launching without proper safeguards |
| Ethical guidelines | Define principles for fairness, transparency, and non-discrimination | Reduces legal exposure and protects brand reputation |
| Data quality standards | Set rules for data sourcing, labeling, lineage, and access | Ensures models produce reliable, auditable outputs |
| Accountability structure | Assign clear ownership for each AI system’s performance and compliance | Eliminates confusion when issues arise post-deployment |
| Continuous monitoring | Track model performance, drift, and bias after launch | Catches degradation before it reaches customers or regulators |

Each of these areas addresses a specific failure point that derails AI initiatives. Together, they form a practical AI governance framework that supports scale rather than blocking it.

How to Build an AI Governance Framework That Works

Building governance does not require a massive bureaucracy. It requires clarity, commitment, and a few essential structures.

Start with a Risk Assessment

Not every AI project carries the same risk. An internal meeting summarizer is low risk. An algorithm that approves loan applications is high risk. Use a framework like the NIST AI Risk Management Framework to classify projects early. This determines the level of oversight each initiative requires.
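As a rough illustration, an intake step could encode the classification as a small rule set. The sketch below is written in Python, and every name in it (`UseCase`, `RiskTier`, `classify`, `REQUIRED_OVERSIGHT`) is hypothetical. The three criteria and the oversight levels are assumptions for the example, not something the NIST framework prescribes.

```python
from dataclasses import dataclass
from enum import Enum

class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"

@dataclass(frozen=True)
class UseCase:
    name: str
    affects_individuals: bool  # outcome materially affects a person (credit, hiring, health)
    fully_automated: bool      # no human reviews the output before it takes effect
    uses_sensitive_data: bool  # personal, financial, or health data

def classify(use_case: UseCase) -> RiskTier:
    """Assign a tier using illustrative rules; real criteria come from your own policy."""
    if use_case.affects_individuals:
        return RiskTier.HIGH
    if use_case.uses_sensitive_data or use_case.fully_automated:
        return RiskTier.MEDIUM
    return RiskTier.LOW

# Hypothetical mapping from tier to the oversight each project must pass.
REQUIRED_OVERSIGHT = {
    RiskTier.LOW: "team lead sign-off",
    RiskTier.MEDIUM: "governance committee review",
    RiskTier.HIGH: "governance committee review, legal review, human-in-the-loop",
}

if __name__ == "__main__":
    summarizer = UseCase("meeting summarizer", False, False, False)
    loan_model = UseCase("loan approval", True, True, True)
    for uc in (summarizer, loan_model):
        tier = classify(uc)
        print(uc.name, tier.value, REQUIRED_OVERSIGHT[tier])
```

With these assumed rules, the meeting summarizer lands in the low tier and the loan approval model in the high tier, matching the two examples above. Writing the rules down this explicitly means the classification can be reviewed and versioned like any other policy.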

Create an AI Ethics Charter

Document your organization’s principles for responsible AI use in plain language. Cover topics like data privacy, bias prevention, human oversight requirements, and acceptable use boundaries. This charter becomes the foundation for every team building or deploying AI.

Establish Board-Level Oversight

AI governance cannot live exclusively with the data science team. Responsible AI leadership requires executive sponsorship and board-level visibility. The board should receive regular updates on AI risk exposure, compliance status, and alignment with strategic objectives.

Define Human-in-the-Loop Requirements

For high-stakes decisions — hiring, lending, medical diagnosis, legal analysis — require a human reviewer before any AI recommendation becomes final. This single practice prevents a significant portion of the errors that damage trust and trigger regulatory scrutiny.
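One way to enforce this in software is a gate that refuses to finalize a high-stakes recommendation until a named reviewer is attached. The following Python sketch assumes a hypothetical `Recommendation` record and `finalize` function. The domain names mirror the examples above and are not an exhaustive list.

```python
from dataclasses import dataclass
from typing import Optional

# Domains treated as high stakes in this sketch (an assumption, adjust to policy).
HIGH_STAKES_DOMAINS = {"hiring", "lending", "medical_diagnosis", "legal_analysis"}

@dataclass
class Recommendation:
    domain: str
    decision: str
    reviewer: Optional[str] = None  # set once a human has signed off

def finalize(rec: Recommendation) -> str:
    """Return the final decision, refusing high-stakes ones that lack a reviewer."""
    if rec.domain in HIGH_STAKES_DOMAINS and not rec.reviewer:
        raise PermissionError(
            f"{rec.domain} decision requires human review before it is final"
        )
    return rec.decision

if __name__ == "__main__":
    print(finalize(Recommendation("meeting_summary", "send")))
    print(finalize(Recommendation("lending", "approve", reviewer="j.doe")))
```

Raising an error rather than silently queueing the decision is a design choice for the sketch. A production system would more likely route the item to a review queue and record who approved it and when.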

Address Shadow AI Directly

Banning unapproved AI tools rarely works. Instead, provide sanctioned alternatives that meet security and compliance standards. Create a simple approval process for new tools. Make it easier to use governed AI than to go around it.

Monitor, Audit, and Adapt

AI risk management is not a one-time project. Schedule regular audits of model performance, data quality, and compliance alignment. Update governance policies as regulations evolve and as your AI portfolio grows. The EU AI Act alone phases in its requirements over several years, with obligations taking effect in stages through 2027, so static policies will quickly become outdated.
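Part of a recurring audit can be automated. One common drift check is the Population Stability Index (PSI), which compares the distribution of a model's recent outputs against a baseline captured at launch. The Python sketch below is a minimal version under stated assumptions. The function names are hypothetical, the data is made up for illustration, and the 0.25 threshold is a widely used rule of thumb rather than a standard.

```python
import math
from collections import Counter

def psi(baseline: list[str], current: list[str], eps: float = 1e-6) -> float:
    """Population Stability Index between two samples of categorical values.
    Numeric scores should be binned into categories first."""
    categories = set(baseline) | set(current)
    base_counts, cur_counts = Counter(baseline), Counter(current)
    total = 0.0
    for c in categories:
        # Floor each share at eps so a category missing from one sample
        # does not cause a division by zero or log(0).
        p = max(base_counts[c] / len(baseline), eps)
        q = max(cur_counts[c] / len(current), eps)
        total += (q - p) * math.log(q / p)
    return total

DRIFT_THRESHOLD = 0.25  # rule-of-thumb cutoff (assumption); tune per model

def needs_review(baseline: list[str], current: list[str]) -> bool:
    """Flag the model for a governance review when drift exceeds the threshold."""
    return psi(baseline, current) > DRIFT_THRESHOLD

if __name__ == "__main__":
    at_launch = ["approve"] * 70 + ["deny"] * 30   # illustrative data
    this_month = ["approve"] * 40 + ["deny"] * 60  # illustrative data
    print(round(psi(at_launch, this_month), 3), needs_review(at_launch, this_month))
```

A check like this only says that the output mix has shifted. Deciding whether the shift reflects a real change in the population or a degrading model still takes a human review.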

Real-World Consequences of Weak AI Governance

The cost of getting governance wrong is not theoretical. Financial institutions have faced multimillion-dollar fines for deploying biased lending algorithms. Healthcare organizations have pulled AI diagnostic tools after they produced inconsistent results across demographic groups. Retailers have suffered public backlash when AI-generated content offended customers.

In each case, the technology functioned as designed. The governance around it did not. These examples reinforce that AI transformation is a problem of governance, because the technology will do exactly what you allow it to do, including things you never intended.

The Competitive Advantage of Governing AI Well

Organizations that invest in governance early gain three measurable advantages.

First, they scale faster. Projects move from pilot to production with fewer delays because compliance, ethics, and risk have already been addressed.

Second, they reduce cost. Governed AI projects avoid the expensive rework, legal disputes, and reputational damage that plague ungoverned ones. IBM research indicates that only 16% of AI initiatives reach enterprise-wide scale, and governance is often what separates the projects that do.

Third, they build trust. Customers, regulators, and partners increasingly evaluate companies on how responsibly they use AI. A visible commitment to governance signals maturity and reliability that competitors without frameworks cannot match.

What Comes Next for AI Governance

The governance landscape will continue to evolve. Expect tighter regulations in the United States to follow the EU’s lead. Expect industry-specific standards to emerge in healthcare, financial services, and education. And expect AI governance tools — automated compliance monitoring, bias detection platforms, and model audit systems — to mature rapidly.

But tools alone will not solve the challenge. AI transformation is a problem of governance because it ultimately depends on people, culture, and leadership commitment. The organizations that treat governance as a strategic enabler — rather than a bureaucratic obstacle — will be the ones that turn AI investment into lasting competitive advantage.

FAQs

Why do most AI projects fail?

Most AI projects fail due to missing governance structures, unclear ownership, and poor alignment with business strategy, not because the underlying technology is flawed.

What is shadow AI?

Shadow AI refers to employees using unapproved AI tools without IT oversight. It creates data security gaps, compliance violations, and uncontrolled risk exposure across the organization.

How does the EU AI Act affect companies?

The EU AI Act classifies AI systems by risk level and imposes strict transparency, documentation, and accountability requirements. Companies that place AI systems on the EU market, or whose systems produce outputs used in the EU, fall within its scope.

What role should the board play in AI governance?

The board should provide executive oversight, review AI risk exposure regularly, and ensure that AI initiatives align with the organization’s strategic goals and ethical commitments.

Can software alone solve AI governance?

No. Software supports governance by automating monitoring and compliance tasks, but it cannot replace the leadership decisions, ethical principles, and organizational culture that a governance strategy requires.